Rapport-Aware Peer Tutor: Designing a virtual peer tutor to build rapport and support student learning

Overview

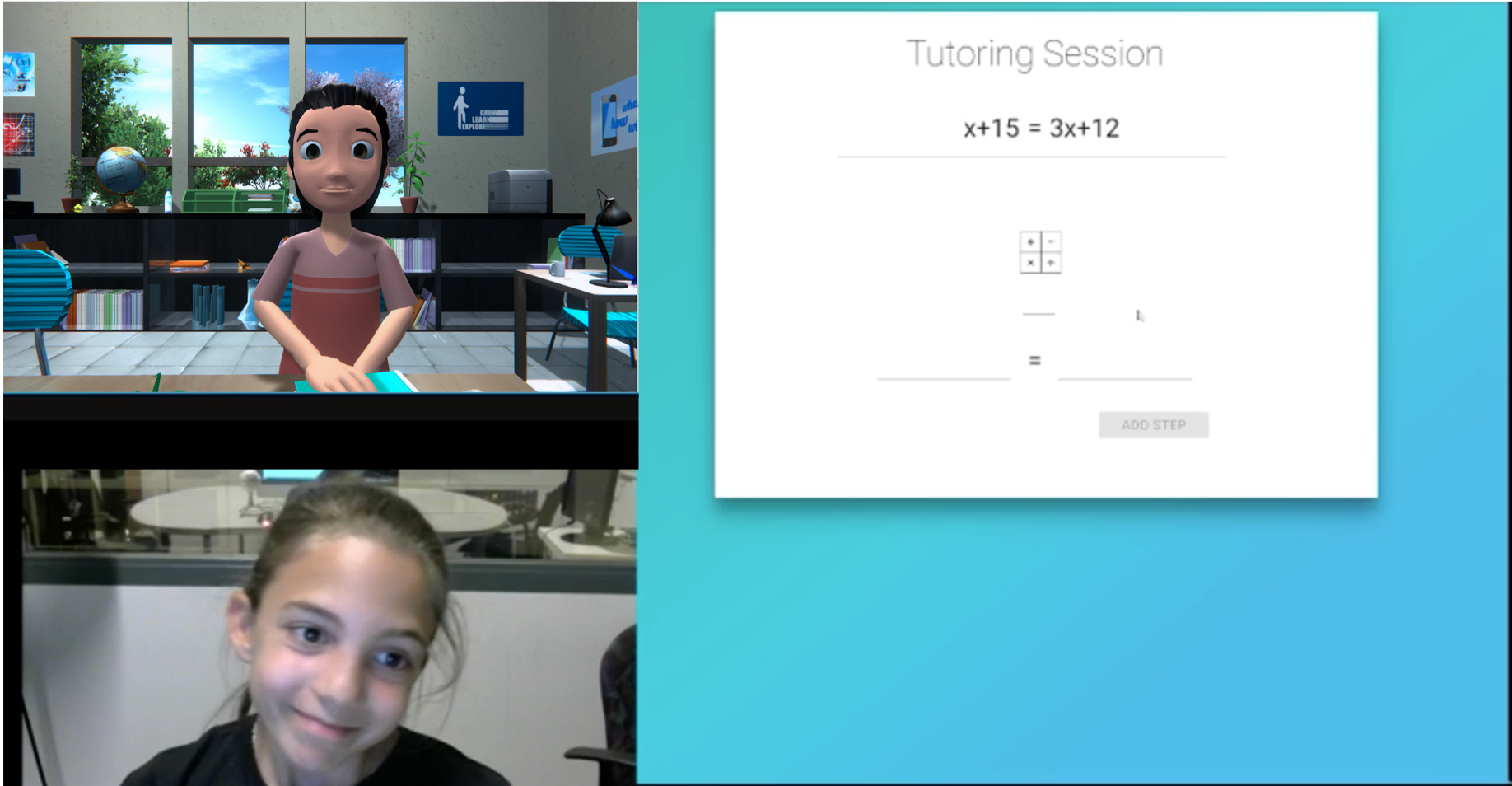

Students’ future success increasingly hinges on their socio-emotional skills, such as their ability to communicate and collaborate effectively. Unlike most educational technologies, the RAPT project (“Rapport-Aware Virtual Peer Tutor”) has developed a virtual partner that students can collaborate with, and that responds to, and can help foster, students’ socio-emotional awareness.

Individual instruction from a private tutor has been shown to be the most effective form of learning, but, unfortunately, not every student has access to a private tutor. In fact, the students who most need individualized instruction are often the least likely to receive it. To address this, “intelligent tutoring systems” have been developed that provide personalized, adaptive instruction and feedback for a variety of domains. However, decades of research on learning have demonstrated that learning is about more than simply providing the right exercises to students.

Learning is fundamentally a social endeavor, where the relationships that students build with their teachers and fellow learners can contribute to their motivation to learn and feelings of belonging, their persistence in the face of failure, and, ultimately, their learning outcomes. Intelligent tutoring systems, with their focus on the cognitive needs of students, often leave unaddressed the critical challenge of supporting the social relationships that are essential to learning.

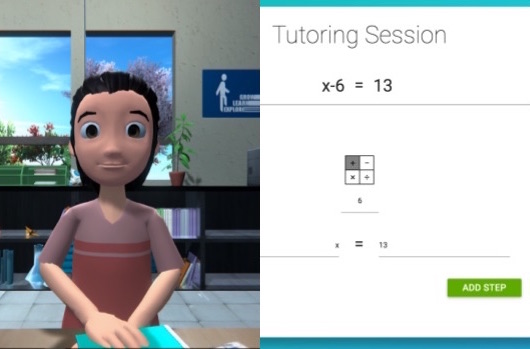

To address this gap, we have developed the first “Rapport-Aware Virtual Peer Tutor” (RAPT), using cutting-edge socially-aware artificial intelligence developed in the ArticuLab at Carnegie Mellon University. Our system models students’ knowledge as well as the rapport that develops between students and their virtual partner over time. It then reasons about the most pedagogically and socially beneficial thing to say, and generates the most appropriate response to maximize students’ learning and the rapport between students and the virtual agent.

We are currently running a study with 6th-8th grade students to evaluate the impact of this rapport-aware virtual tutor on students’ self-efficacy, motivation, engagement, and their Algebra knowledge.

You can find a 1-page handout of this project here.

Motivation

One of the most interesting, and least understood, fields of behavioral science involves the social substructure of daily life: friendship, politeness, impoliteness, relationship formation, and rapport. We all know that feeling of “getting along” or “clicking” with someone, and how that rapport builds as a relationship deepens, but there are few comprehensive theories about what that rapport-building process is and the mechanisms by which it takes place.

And yet, it has been shown that rapport plays an important role in everyday life: people learn more from teachers and peers with whom they feel rapport, have improved therapeutic benefits from therapists with whom they feel rapport, are more honest and more likely to complete surveys when they feel rapport with the interviewer, and so on. As virtual agents and AI take on an increasingly important and ubiquitous role in our lives, we believe that these systems should know how to build rapport so as to better support their users over time.

We know from research in the Learning Sciences that intelligent tutoring systems can help students learn, often vastly improving on learning in traditional, lecture-based classrooms [Kulik and Fletcher, 2016]. Furthermore, we know that when classroom peers collaborate on a learning project, those students who are friends collaborate more effectively than those students who are not friends [Azmitia and Montgomery, 1992].

When we combine these observations, we conclude that a virtual peer tutor that can develop rapport with a student – or even engage in reciprocal peer tutoring (i.e. teaching and being taught by them) – may be of great use in supporting learning. Imagine a student who, having built rapport with their virtual tutor, takes more risks in learning, asks for deeper explanations when they don’t understand, and is more motivated to continue learning with their new virtual peer when they are frustrated. This is the system we are working towards.

Methodology

Iterative Research Methodology

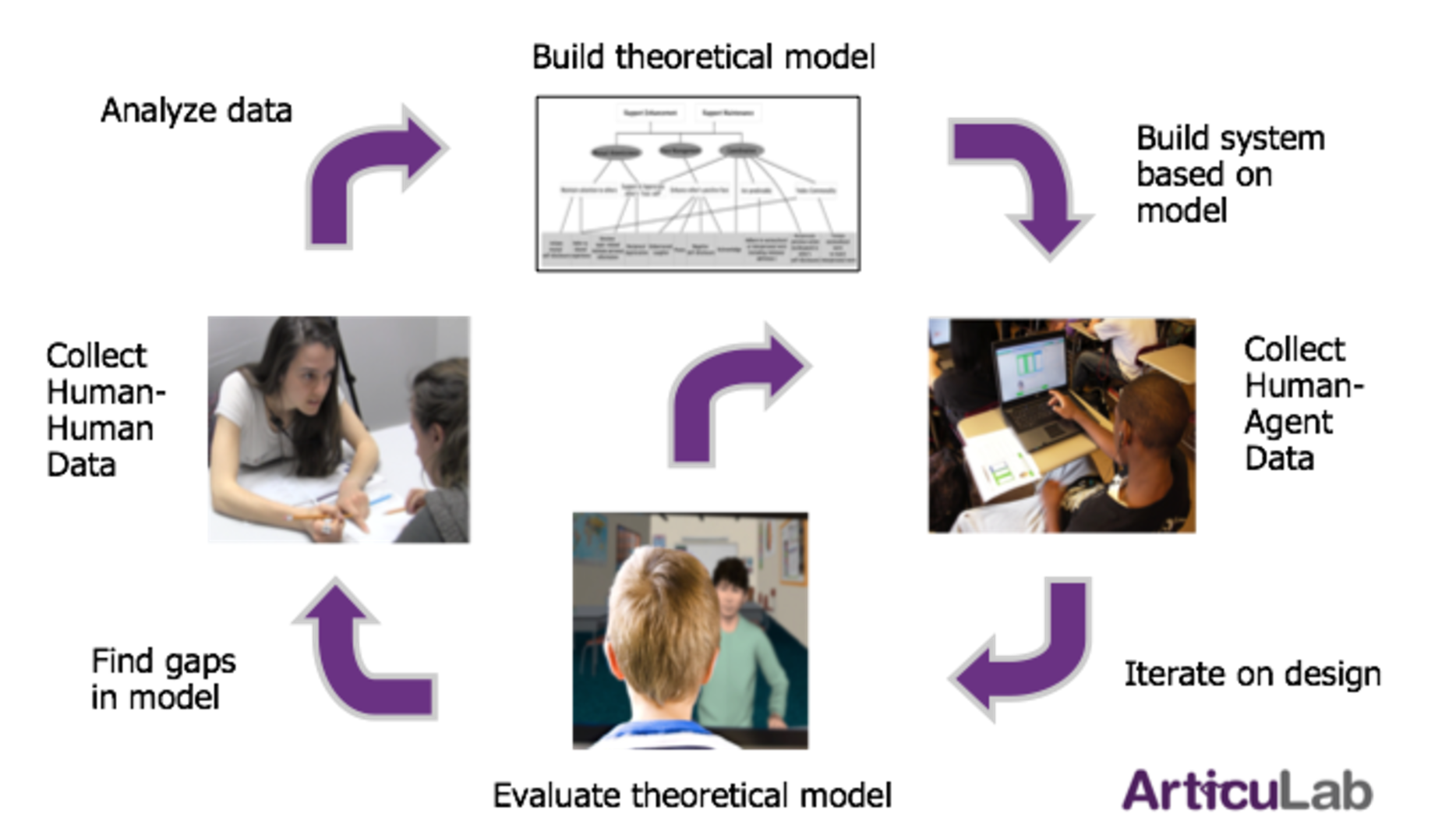

Here in the ArticuLab, we follow an iterative experimental design methodology in which we study human behavior in order to build a theory, design a virtual agent that can instantiate and test that theory, and iterate on both the theory and the design of the agent. We draw on a variety of empirical research methodologies and use qualitative, quantitative, and computational means to analyze our data for particular verbal and nonverbal behaviors of interest. We then use those analyses to develop and iterate on a theoretical model of rapport and its impact on learning.

To investigate how particular verbal and nonverbal behaviors serve social and pedagogical goals and contribute to rapport-building and learning, we have built a dialogue system to implement that theory as a computational model, using an embodied conversational agent as the front-end interface to interact with the students. We then conduct empirical studies of the human-agent interactions, to investigate particular hypotheses about our theory of rapport and contribute to a more robust theory of rapport-building in learning.

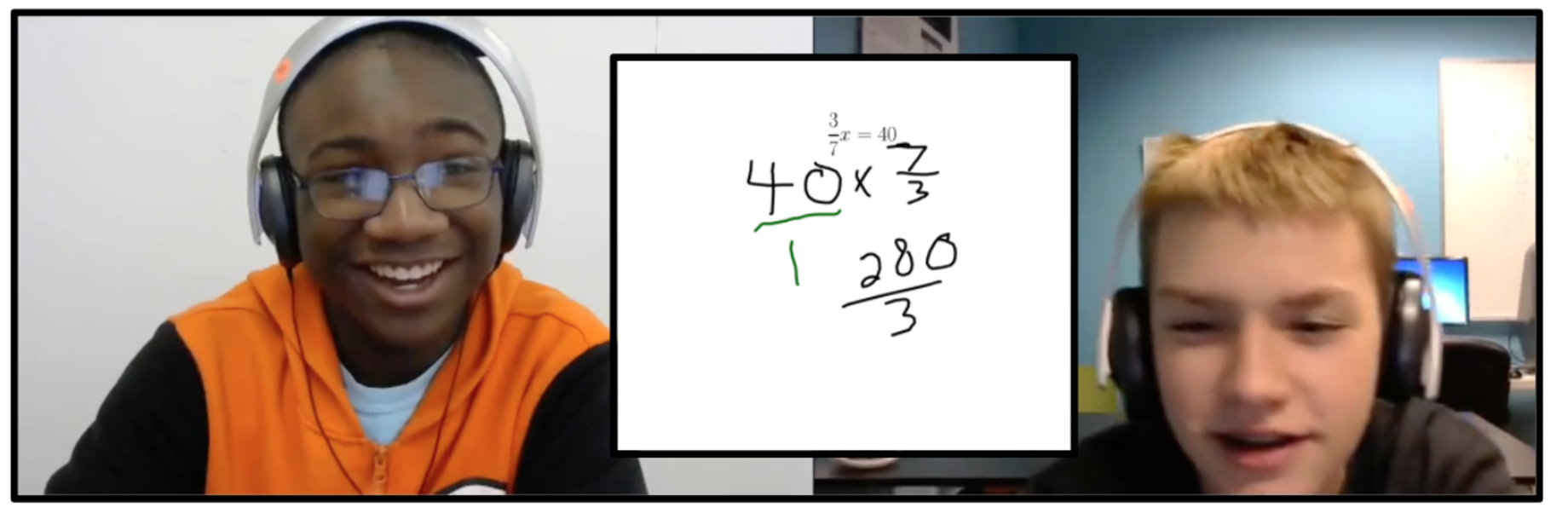

Data Collection

To train the machine-learning components of our virtual agent, we collect data from pairs of students tutoring each other. We collected data from 22 dyads of middle and early high school students tutoring each other in linear algebra over two weekly hour-long sessions. We had the students teach each other over Skype, so that the front-facing cameras would be better able to capture the students’ facial expressions. This aids automatic detection and extraction, using the OpenFace toolkit for nonverbal behavior analysis, of the nonverbal behaviors that contribute to and signal higher rapport.

We then used a combination of human annotation and machine-learning natural language classifiers to identify the tutoring and learning strategies used by each student, to understand how students’ use of those strategies changes as rapport develops between them.

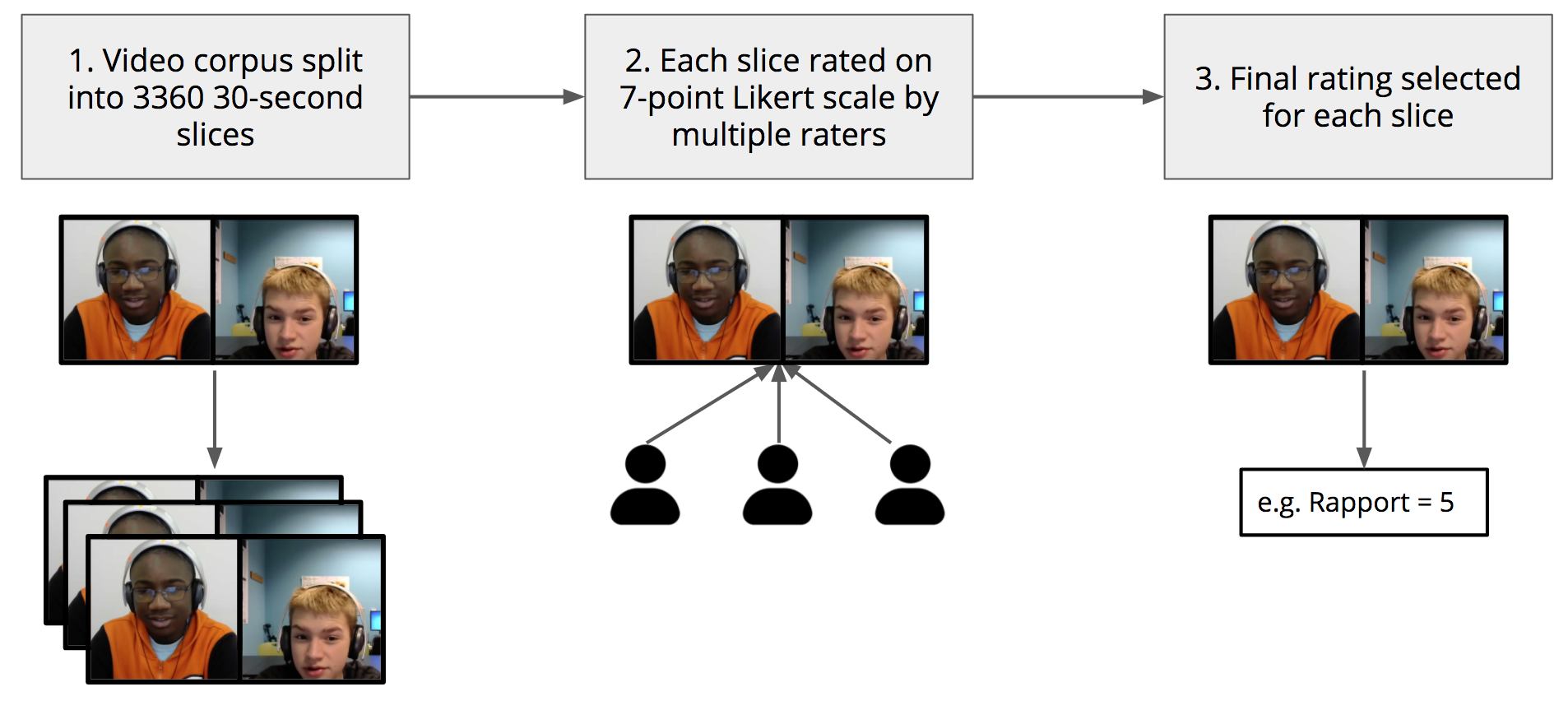

Finally, we obtained high-frequency ratings of the rapport level between participants every thirty seconds, using third-party observers in a crowd-sourcing approach (Sinha and Cassell, 2015).

Crowdsourced Thin-Slice Rapport Rating Process
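As a rough illustration of the thin-slice procedure, the sketch below cuts an hour-long session into thirty-second slices and shuffles them before they would be shown to raters, as described for the ground-truth ratings under Rapport Estimator. The function names, the fixed seed, and the data layout are assumptions made for this example and are not taken from the project's code.

```python
import random

def thin_slices(duration_s, slice_s=30):
    """Return (start, end) offsets in seconds covering a session in fixed-length slices."""
    return [(start, min(start + slice_s, duration_s))
            for start in range(0, duration_s, slice_s)]

def shuffled_for_rating(slices, seed=0):
    """Return the slices in random order, each tagged with its original index.

    Keeping the original index lets ratings be re-aligned with the timeline
    after raters have judged the slices out of order.
    """
    indexed = list(enumerate(slices))
    random.Random(seed).shuffle(indexed)
    return indexed

slices = thin_slices(3600)                      # one hour-long session
presentation_order = shuffled_for_rating(slices)
print(len(slices), "slices; first shown to raters:", presentation_order[0])
```

Shuffling means each rating reflects the rapport visible within a slice rather than a change relative to the slice before it.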

See here for more detail on previous studies of peer tutoring from the ArticuLab.

Theory

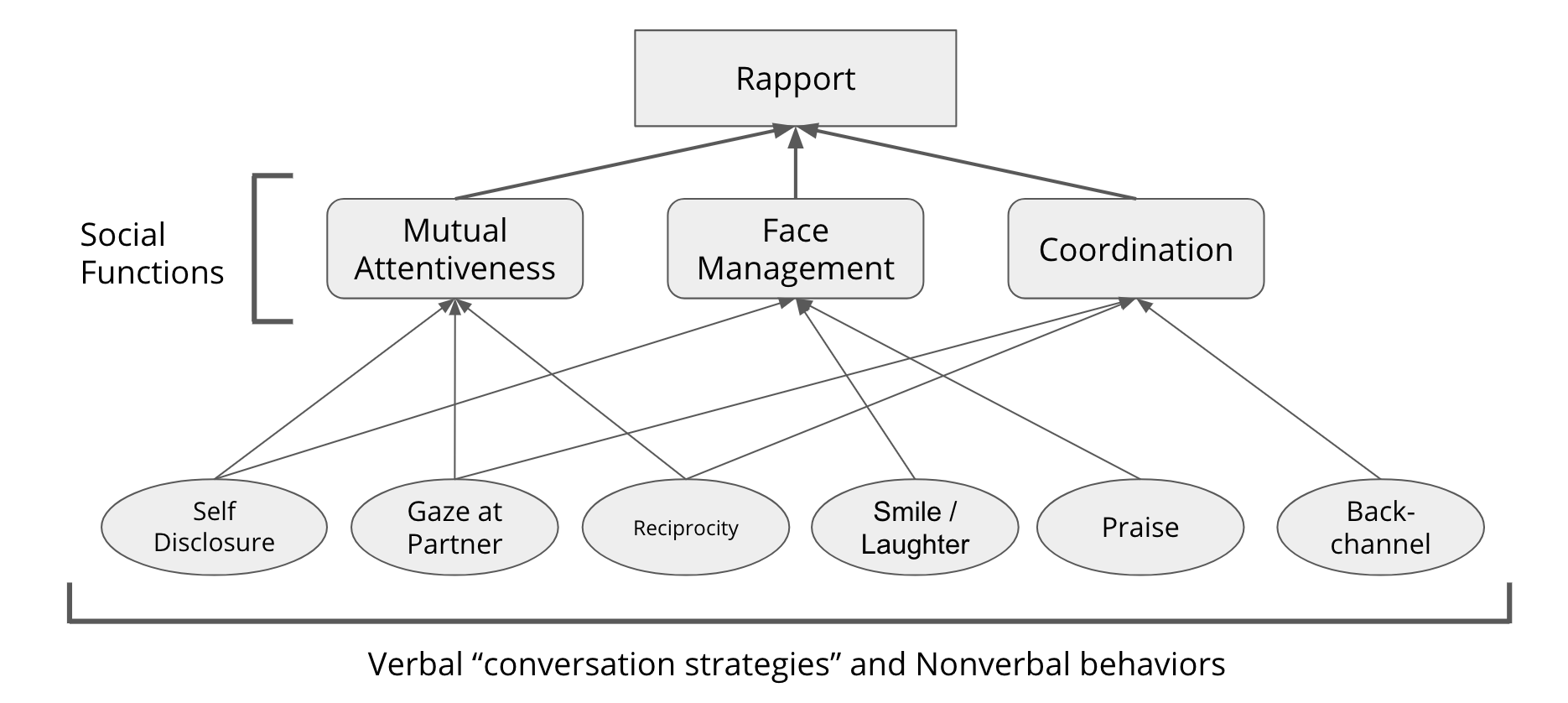

Based on the data analysis described above (and in more detail here), as well as a thorough investigation into prior literature from the social sciences on the components that make up the experience of rapport, the way people assess rapport in others, and the goals and strategies people use to build, maintain, and destroy rapport, we propose a model for rapport enhancement, maintenance, and destruction in human-human and human-agent interaction. In Spencer-Oatey’s perspective (Spencer-Oatey, 2005), each of these tasks requires management of face, which, in turn, relies on behavioral expectations and interactional goals. Our data support the tremendous importance of face, as the teens alternately praise and insult one another, all the while hedging their own positive performance on the algebra task in order to highlight the performance of the other.

The data also contain numerous examples of mutual attentiveness and coordination as input into rapport management. Our model posits a tripartite approach to rapport management, comprising mutual attentiveness, coordination, and face management (Zhao et al., 2014; Xu and Cassell, 2017).

Theoretical Model of Rapport Development

Architecture

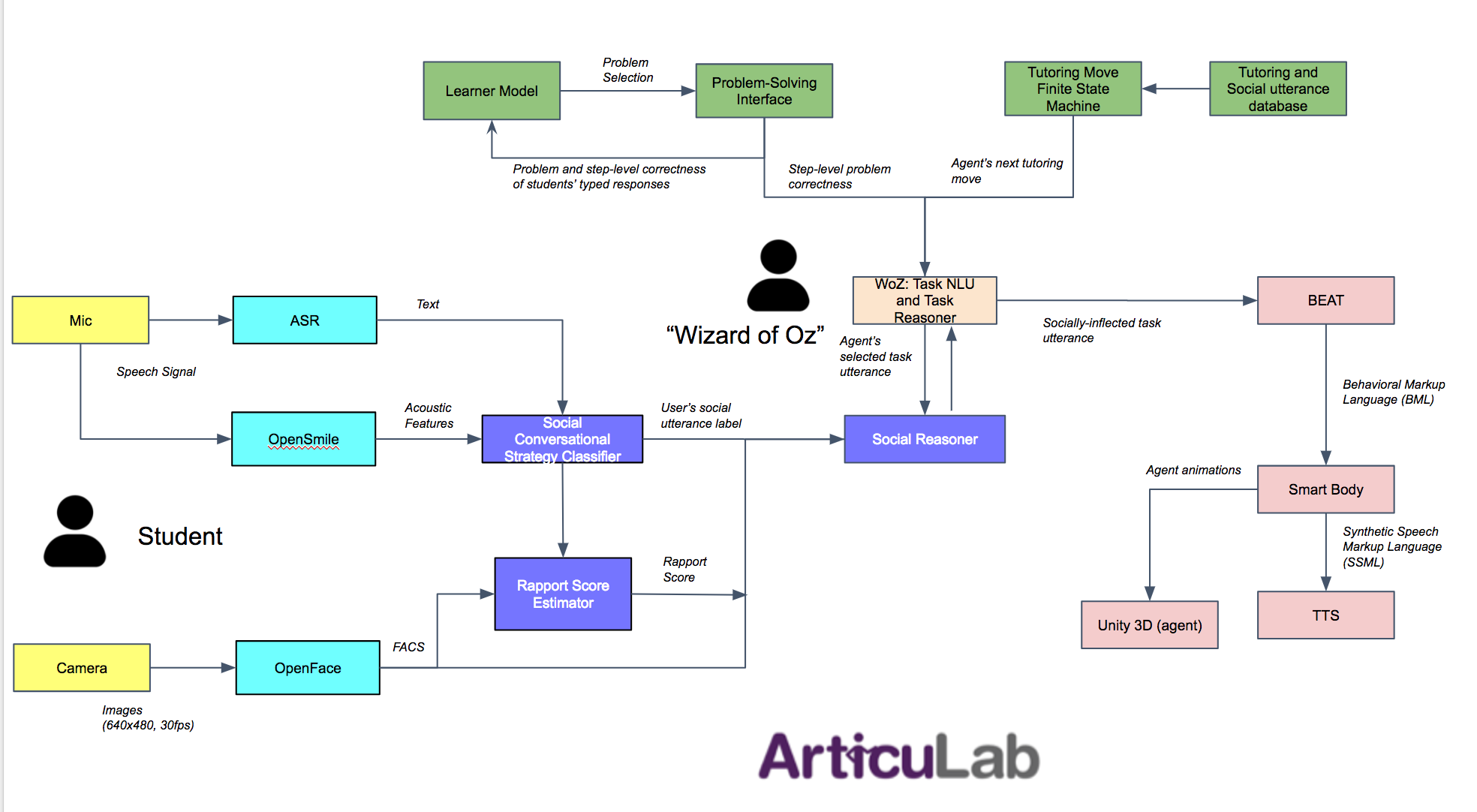

Having proposed a theoretical framework for rapport management, we have also developed a computational architecture that allows virtual agents to enhance, maintain, or even destroy long-term rapport with their users (Papangelis et al., 2014), and have implemented that architecture for a virtual tutoring agent in the RAPT project, as well as in a “Socially-Aware Robot Assistant” in the SARA project (Matsuyama et al., 2016).

System Architecture for Rapport-Aware Virtual Tutor

Specifically, the RAPT system architecture contains the following modules:

- The computational model of rapport: The computational model is the first to explain how humans in dyadic interactions build, maintain, and destroy rapport through the use of specific conversational strategies that function to fulfill specific social goals, and that are instantiated in particular verbal and nonverbal behaviors (Zhao et al., 2014).

- Conversational strategy classification: The conversational strategy classifier can recognize high-level language strategies closely associated with social goals through training on linguistic and acoustic features associated with those conversational strategies in a test set (Zhao, Sinha, Black and Cassell (SIGDIAL 2016)).

- Rapport level estimation: The rapport estimator estimates the current rapport level between the user and the agent using temporal association rules (Zhao, Sinha, Black and Cassell (IVA 2016 Best Student Paper); Madaio, Lasko, Ogan, Cassell (EDM 2017)).

- Social reasoning: The social reasoner outputs a social conversational strategy that the system must adopt in the current turn. The reasoner is modeled as a spreading activation network (Romero, Zhao, Matsuyama, Cassell (IJCAI 2017)).

- Natural language and nonverbal behavior generation: The natural language generation module expresses conversational strategies in specific language and associated nonverbal behaviors, which are then performed by a virtual agent (Cassell et al., 2001).

Social Conversational Strategy Classifier

We have implemented a conversational strategy classifier to automatically recognize the user’s social conversational strategies – particular ways of talking that contribute to building, maintaining, or sometimes destroying a budding relationship. These include self-disclosure (SD), questions to elicit self-disclosure (QE), reference to shared experience (RSE), praise (PR), violation of social norms (VSN), and back-channel (BC). By including rich contextual features drawn from the verbal, visual, and vocal modalities of the speaker and interlocutor in the current and previous turns, we can recognize these dialogue phenomena with an accuracy of over 80% and a kappa of over 60%.
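To make the two reported metrics concrete, here is a minimal sketch of how accuracy and a chance-corrected kappa can be computed for one strategy detector. It assumes the kappa in question is Cohen's kappa, which the text above does not state, and the label sequences are invented for illustration rather than drawn from the corpus.

```python
from collections import Counter

def accuracy(gold, pred):
    """Fraction of turns where the predicted label matches the annotated one."""
    return sum(g == p for g, p in zip(gold, pred)) / len(gold)

def cohens_kappa(gold, pred):
    """Agreement between gold and predicted labels, corrected for chance agreement."""
    n = len(gold)
    p_observed = accuracy(gold, pred)
    gold_counts = Counter(gold)
    pred_counts = Counter(pred)
    # Chance agreement: probability both pick the same label independently.
    p_chance = sum(gold_counts[c] * pred_counts[c] for c in gold_counts) / (n * n)
    return (p_observed - p_chance) / (1 - p_chance)

# Invented labels for ten turns: is each turn a self-disclosure (SD) or not?
gold = ["SD", "SD", "none", "none", "SD", "none", "none", "none", "SD", "none"]
pred = ["SD", "none", "none", "none", "SD", "none", "SD", "none", "SD", "none"]

print(f"accuracy = {accuracy(gold, pred):.2f}, kappa = {cohens_kappa(gold, pred):.2f}")
```

Kappa is lower than accuracy here because some of the agreement would be expected by chance alone, which is why both figures are worth reporting when labels are imbalanced.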

Rapport Estimator

We use the framework of temporal association rule learning to perform a fine-grained investigation into how sequences of interlocutor behaviors signal high and low interpersonal rapport. The behaviors analyzed include visual behaviors such as eye gaze and smiles, and both social and tutoring-related verbal conversational strategies, such as self-disclosure, social norm violation, praise, feedback, hints, instructions, and metacognitive reflection. We developed a forecasting model involving two-step fusion of the learned temporal association rules.

The estimation of rapport comprises two steps. In the first step, we learn the weighted contribution (vote) of each temporal association rule in predicting the presence or absence of a certain rapport state (via seven random-forest classifiers). In the second step, we learn the weight corresponding to each of the binary classifiers for the rapport states, in order to predict the level of rapport (via a multi-class classification model, a “Support Vector Machine”). Ground truth for the rapport state was obtained by having naive annotators rate the rapport between two interactants in the teen peer-tutoring corpus for every 30-second slice of an hour-long interaction (the slices were presented to the annotators in randomized order, so that ratings were of rapport states and not rapport deltas).
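The data flow of the two-step fusion can be sketched as follows. This is a deliberately simplified stand-in: the weights are fixed by hand rather than learned, plain weighted sums replace the random-forest and Support Vector Machine models, only three rapport states are shown instead of seven, and every rule name is hypothetical.

```python
# Hypothetical temporal association rules and hand-set vote weights (illustration only).
RULE_VOTES = {
    # rapport state -> {rule name: vote weight for that state being present}
    2: {"silence_then_gaze_away": 0.7, "vsn_without_smile": 0.4},
    4: {"praise_then_smile": 0.5, "hint_then_backchannel": 0.3},
    6: {"mutual_smile_then_sd": 0.8, "vsn_then_mutual_smile": 0.6},
}

# Hypothetical second-step weights, one per per-state detector.
STATE_WEIGHTS = {2: 1.0, 4: 0.8, 6: 1.2}

def state_scores(fired_rules, rule_votes):
    """Step 1: score each rapport state by summing the votes of the rules that fired."""
    return {state: sum(w for rule, w in votes.items() if rule in fired_rules)
            for state, votes in rule_votes.items()}

def fuse(scores, state_weights):
    """Step 2: weight each per-state score and return the best-supported rapport level."""
    weighted = {state: state_weights[state] * score for state, score in scores.items()}
    return max(weighted, key=weighted.get)

# Rules observed in the current 30-second window (invented).
fired = {"praise_then_smile", "vsn_then_mutual_smile"}
scores = state_scores(fired, RULE_VOTES)
estimate = fuse(scores, STATE_WEIGHTS)
print("per-state scores:", scores, "-> estimated rapport level:", estimate)
```

The point of the second step is that the per-state detectors are not equally reliable, so their outputs are reweighted before a single rapport level is chosen.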

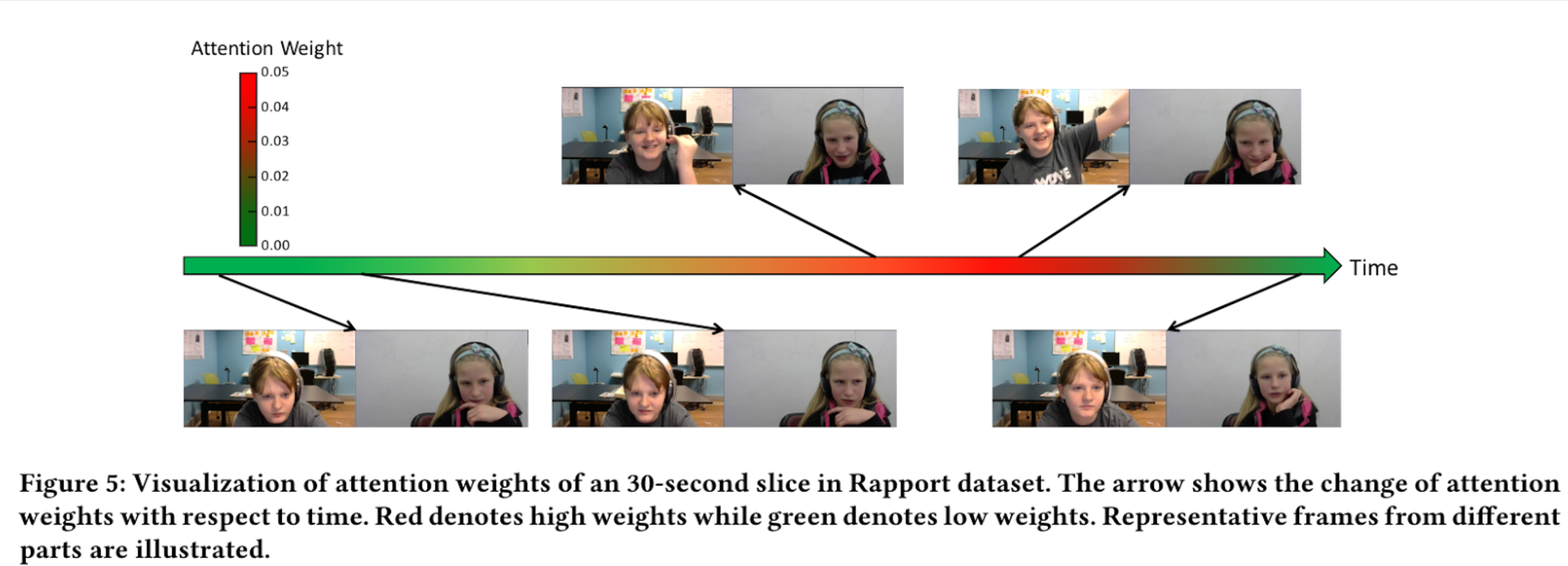

We are also currently experimenting with a different machine learning approach, using “temporally-selective attention models” in a neural network ensemble model. See Yu et al. (2017) for more details.

Temporally-Selective Attention Model for Rapport Estimation

Social Reasoner

The social reasoner is designed as a spreading activation model – a behavior network consisting of activation rules that govern which conversational strategy the system should adopt next. It takes as inputs the system’s intentions (e.g. “negative_feedback”, “provide_hint”), the history of the user’s conversational strategies, selected nonverbal behaviors (e.g. head nod and smile), and the current rapport level. Once the activation energies have been updated, the Social Reasoner selects the system’s next conversational strategy and sends both the system’s intent and the selected conversational strategy to the NLG. The activation preconditions of the behavior network are extracted from the annotations carried out on the peer-tutoring corpus.
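A toy version of this kind of behavior network is sketched below. Each node is a conversational strategy that gains activation from matching input facts, retains a decayed share of its previous activation, and passes a fraction of its energy along links to other nodes; the most activated node is selected. The node set, preconditions, links, and numeric parameters are all hypothetical and chosen only to show the mechanism.

```python
from dataclasses import dataclass

@dataclass
class Strategy:
    name: str
    preconditions: frozenset   # input facts that feed energy into this node
    excites: tuple = ()        # names of nodes this node spreads energy to
    activation: float = 0.0

def step(network, facts, decay=0.5, input_gain=1.0, spread=0.25):
    """Update activations for one turn and return the name of the winning strategy."""
    # 1. Decay previous activation and inject energy from matching facts.
    for node in network.values():
        matched = len(node.preconditions & facts)
        node.activation = decay * node.activation + input_gain * matched
    # 2. Spread a fraction of each node's activation along its outgoing links.
    incoming = {name: 0.0 for name in network}
    for node in network.values():
        for target in node.excites:
            incoming[target] += spread * node.activation
    for name, extra in incoming.items():
        network[name].activation += extra
    # 3. Select the most activated strategy.
    return max(network.values(), key=lambda n: n.activation).name

network = {s.name: s for s in [
    Strategy("praise", frozenset({"intent:negative_feedback", "rapport:low"})),
    Strategy("self_disclosure", frozenset({"user:self_disclosure", "rapport:low"}),
             excites=("praise",)),
    Strategy("violate_social_norm", frozenset({"rapport:high", "user:smile"})),
]}

first = step(network, {"intent:negative_feedback", "rapport:low", "user:head_nod"})
second = step(network, {"intent:provide_hint", "rapport:high", "user:smile"})
print(first, "->", second)
```

Because activation decays rather than resets, the history of earlier turns continues to influence which strategy wins, which is one reason a spreading activation design suits turn-by-turn social reasoning.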

Verbal and Nonverbal Language Generation

Given the system’s intention (which includes the current conversational phase, the system intent, and the conversational strategy), these modules generate sentence and behavior plans. The Natural Language Generator (NLG) selects a syntactic template associated with the system’s intention from the sentence database and fills it with content items. The generated sentence plan is sent to BEAT (the Behavior Expression Animation Toolkit), a nonverbal behavior generator, which produces a behavior plan in BML (Behavior Markup Language). The BML is then sent to SmartBody, which renders the required nonverbal behaviors on the Embodied Conversational Agent.
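The template-selection step can be illustrated with a small lookup keyed on system intent and conversational strategy. The templates, the fallback to a neutral "none" strategy, and the content slots are invented for this sketch; it covers only sentence planning and does not produce BML or call BEAT or SmartBody.

```python
# Hypothetical sentence database: (system intent, conversational strategy) -> template.
TEMPLATES = {
    ("provide_hint", "praise"): "You're doing great so far. Try {hint}.",
    ("provide_hint", "none"): "Try {hint}.",
    ("negative_feedback", "self_disclosure"): "I mix that up all the time too. {correction}",
    ("negative_feedback", "none"): "{correction}",
}

def generate(intent, strategy, content):
    """Pick the template for this intent and strategy, then fill in the content items.

    Falls back to the neutral template for the intent when no template exists
    for the requested strategy.
    """
    template = TEMPLATES.get((intent, strategy), TEMPLATES[(intent, "none")])
    return template.format(**content)

print(generate("provide_hint", "praise", {"hint": "subtracting 3 from both sides"}))
print(generate("negative_feedback", "praise", {"correction": "Check the sign on the 4."}))
```

Separating the pedagogical intent from the social strategy in the lookup key means the same hint can be phrased differently depending on what the social reasoner selects.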

Socially-Aware Technologies: The Moral of the Story

The mission of our research is to study human interaction in social and cultural contexts, and use the results to implement computational systems that, in turn, help us to better understand human interaction, and to improve and support human capabilities in areas that really matter. As technologists, and as citizens of the world, it is our choice whether we build killer robots or robots that collaborate with people, our choice whether we seek to obviate the need for social interaction, or ensure that social interaction and interpersonal closeness are as important – and accessible – today as they have always been.

We will continue to iteratively analyze our data and use the findings to update our theoretical framework, which, in turn, allows us to innovate on the computational model and system architecture for a rapport-managing virtual peer. We intend to use this system to contribute to a more robust understanding of the ways that social factors between learners, such as their rapport, improve their learning process, so that every student can have the benefits of learning from and with a tutor with whom they have a close interpersonal bond.

Members

- Principal Investigators

- Justine Cassell

- Amy Ogan

- Louis-Philippe Morency

- ArticuLab Members

- Michael Madaio

- Yoichi Matsuyama

- Robert Huerbin

- David Slebodnick

- Tanmay Sinha

- Ran Zhao

- Research Assistants: Michelina Astle, Jake Beley, Rishabh Chatterjee, Naomi Eigbe, Alvaro Granados, Rae Lasko, Tiffany Lee, Sarah Lehman, Jeffrey Li, William Liu, Kun Peng, Lynnette Ramsay, Anne Widom, Caroline Wu, Zian Zhao

Related Publications

Madaio, M. A., Cassell, J., & Ogan, A. (2017, June). The Impact of Peer Tutors’ Use of Indirect Feedback and Instructions. In Proceedings of the Twelfth International Conference of Computer-Supported Collaborative Learning, 2017. [*Best Student Paper*] [pdf]

Madaio, M., Ogan, A., Cassell, J. (2017). Using Temporal Association Rule Mining to Predict Dyadic Rapport in Peer Tutoring. In Proceedings of the 10th International Conference on Educational Data Mining, 2017. [pdf]

Madaio, M., Ogan, A., & Cassell, J. (2016, June). The Effect of Friendship and Tutoring Roles on Reciprocal Peer Tutoring Strategies. In International Conference on Intelligent Tutoring Systems (pp. 423-429). Springer International Publishing. [pdf]

Matsuyama, Y., Bhardwaj, A., Zhao, R., Romero, O., Akoju, S., & Cassell, J. (2016, September). Socially-aware animated intelligent personal assistant agent. In Proceedings of 17th Annual SIGDIAL Meeting on Discourse and Dialogue. [pdf]

Sinha, T., & Cassell, J. (2015, November). We click, we align, we learn: Impact of influence and convergence processes on student learning and rapport building. In Proceedings of the 1st Workshop on Modeling INTERPERsonal SynchrONy And infLuence (pp. 13-20). ACM. [pdf]

Sinha, T., Zhao, R., & Cassell, J. (2015, November). Exploring socio-cognitive effects of conversational strategy congruence in peer tutoring. In Proceedings of the 1st Workshop on Modeling INTERPERsonal SynchrONy And infLuence (pp. 5-12). ACM. [pdf]

Yu, H., Gui, L., Madaio, M., Ogan, A., & Cassell, J. (2017). Temporally Selective Attention Model for Social and Affective State Recognition in Multimedia Content. In Association for Computing Machinery Conference on Multimedia, 2017. [pdf]

Zhao, R., Sinha, T., Black, A., & Cassell, J. (2016, September). Socially-Aware Virtual Agents: Automatically Assessing Dyadic Rapport from Temporal Patterns of Behavior. In Proceedings of the 16th International Conference on Intelligent Virtual Agents (IVA). [*Best Student Paper*] [pdf]

Zhao, R., Sinha, T., Black, A., & Cassell, J. (2016, September). Automatic Recognition of Conversational Strategies in the Service of a Socially-Aware Dialog System. In Proceedings of 17th Annual SIGDIAL Meeting on Discourse and Dialogue. [pdf]

Acknowledgments

RAPT (the Rapport-Aware Peer Tutor) is a collaboration among Professor Justine Cassell and her research group at Carnegie Mellon University, Amy Ogan, and Louis-Philippe Morency.

The research reported here was supported, in whole or in part, by the National Science Foundation Cyberlearning Award No. 1523162, and the Institute of Education Sciences, U.S. Department of Education, through Grant R305B150008 to Carnegie Mellon University. The opinions expressed are those of the authors and do not represent the views of the Institute or the U.S. Department of Education.

Computing Power provided in part by DroneData